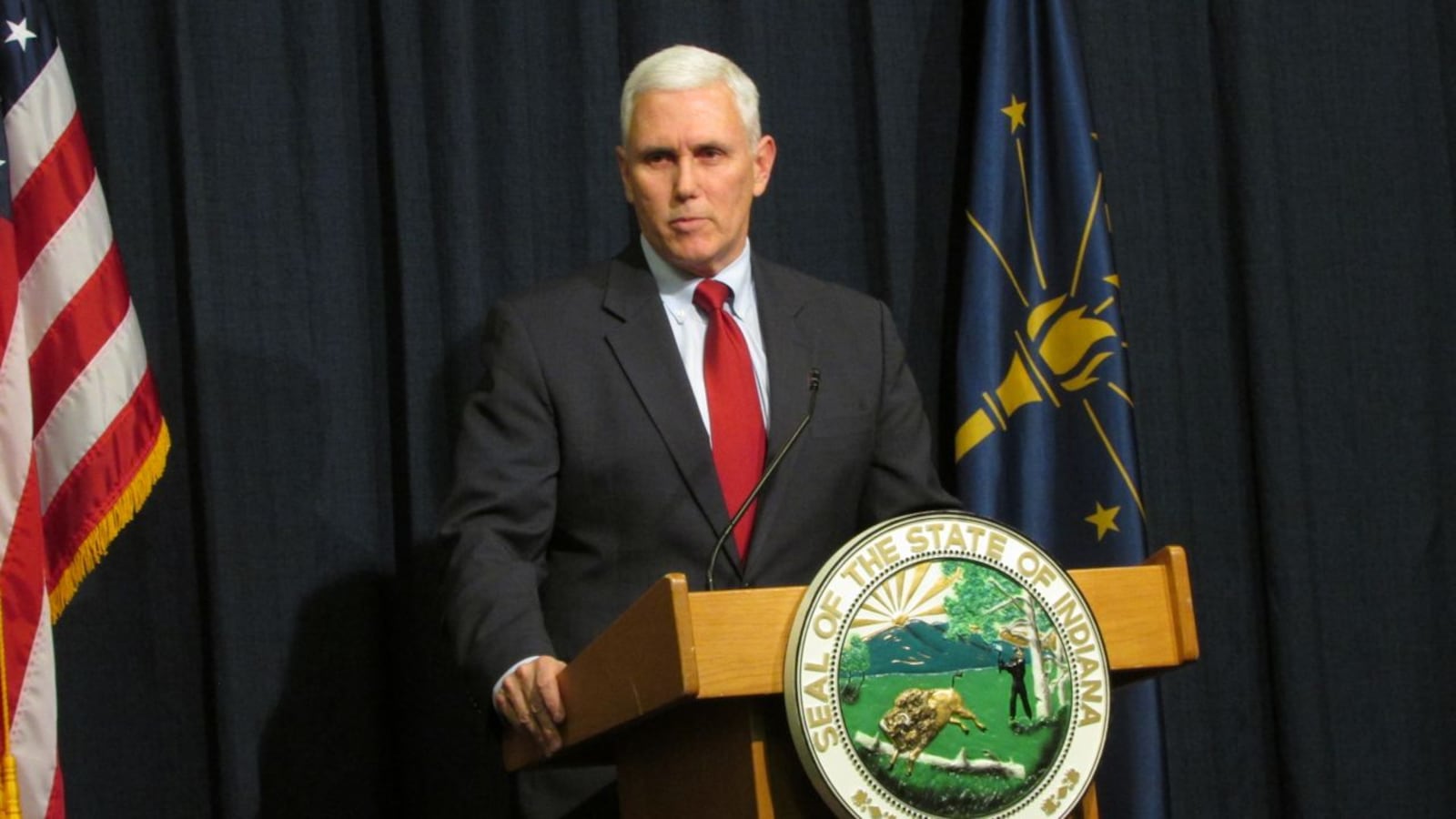

Gov. Mike Pence signed an executive order today aimed at shortening Indiana’s ISTEP test, an action that follows days of heated conversations about how the 2015 test is almost twice as long as last year’s exam.

But it is not clear his order can be executed without cooperation from state Superintendent Glenda Ritz.

“Doubling the length of the ISTEP Plus test is unacceptable, and I won’t stand for it,” Pence said today. “Doubling the testing time for our kids is a hardship on them, it’s a hardship on families, it’s a hardship on our teachers, and this is a moment that calls for decisive action.”

Pence said “national testing experts,” to be named later, would review how the test could be cut down and present recommendations to the Indiana State Board of Education and the Indiana Department of Education.

The education department has a contract with California-based CTB/McGraw-Hill, and is the only state agency that can write and change the test, Pence acknowledged. However, he suggested “unnecessary” reading and social studies sections could be removed, which he said would significantly decrease test time.

But Daniel Altman, spokesman for Glenda Ritz and the department, said CTB/McGraw-Hill wrote the test with federal requirements in mind and there aren’t superfluous sections that can be cut. He also said the department was notified of the executive order just minutes before the governor’s announcement.

“Yes, the test is too long,” Altman said. “However, those are the requirements the federal government has put on us and the requirements that the House and the Senate want tested as well, and so the department has to comply with that.”

Ritz told Pence in a meeting last week that Indiana should suspend A-to-F grades to give teachers and students a year to adjust to the higher expectations of the new ISTEP.

Pence made it clear he opposed that suggestion.

“You don’t throw out the grades, you fix the test,” Pence said. “And that’s what we’re going to do. And we’re going to have time to do it, but we needed to take action today.”

Indiana has an agreement with the U.S. Department of Education giving the state relief, or a waiver, from some sanctions under the federal No Child Left Behind law. In return, the state promised to convert to more rigorous academic standards that would better prepare students for college and careers. Like other states, Indiana was on track to convert to higher standards, and new tests to measure them, in 2015.

But Pence, the legislature and Ritz all joined last year to change direction, dropping Indiana’s plan to follow Common Core standards along with 45 other states. States following Common Core created tests to be used with those standards.

Going its own way put Indiana on a short timeline to adopt new state-created standards — which it did last April — and tests that fit them.

This year’s ISTEP test is longer for a few reasons, the department of education said in documents released on its website.

First, the test itself is harder and might take more time. The 2015 test also has extra questions that don’t count but are needed to help build the 2016 test — a complete overhaul coming next year that the state is still developing.

For 2015, ISTEP also was modified to include new “technology enhanced” questions, which supposedly better measure whether students meet the new standards that attempt to better ready them for college and careers.

Concerns began when parents, teachers and policymakers last week saw documents from the department saying students would spend almost twice as much time taking state standardized tests this year as in years past.

Pence said adopting new standards doesn’t mean test length should double.

“I believe that … the test should roughly be the same burden and the same length as it was before,” Pence said. “Just because the standards are higher and tougher, the questions might be higher and tougher, but it doesn’t mean there need to be more of them.”

This year’s test is slated to take students up to 12-and-a-half hours over several days. Last year’s test took about six hours to complete. Each grade’s total testing time is different, with eighth-graders taking the shortest test at 11 hours and 15 minutes and third-graders taking the longest test at 12-and-a-half hours.

Teachers have been wary of the new ISTEP since the start of the school year because of its new computerized format. They’ve raised questions about how much more students must do on the screen, rather than just selecting multiple-choice answers as they did in the past.

But the first part of the test, which will begin Feb. 25, Altman said, is largely writing-based, with kids responding to short reading passages and solving math problems, mostly on paper. The new computerized part is scheduled to begin in late April.