Chalkbeat published a detailed story about the website GreatSchools and how its school ratings correlate with students’ race and income. The piece was based on an original data analysis. Here’s how we did it.

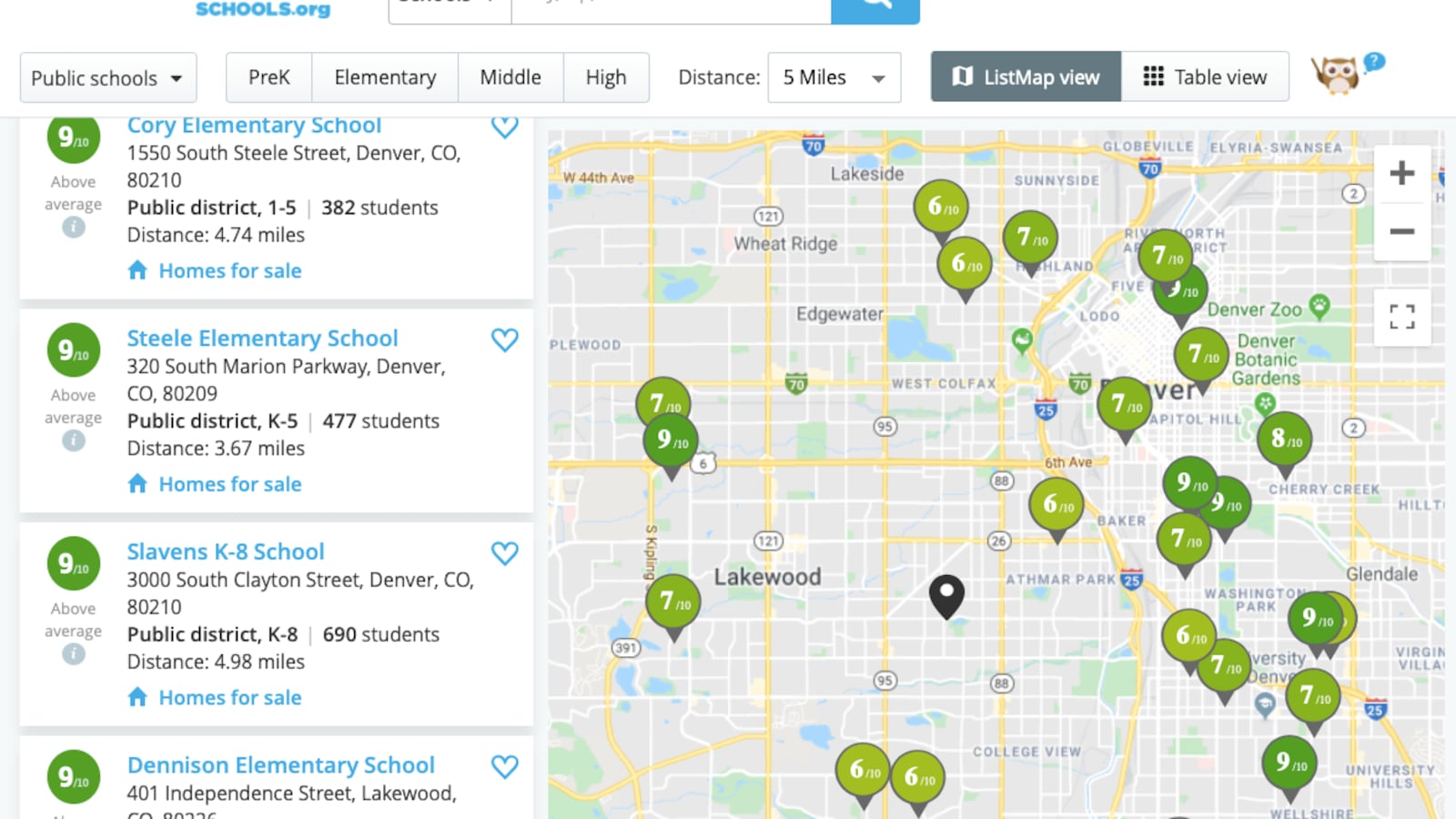

Chalkbeat collected data from the GreatSchools website for several cities and a number of their suburbs, as defined by the Census Bureau’s metropolitan statistical areas. The data, pulled in August 2019, included each public elementary and middle school’s GreatSchools rating on a scale of 1 to 10, the share of students who qualify for free or reduced-price lunch (a proxy for poverty), and the share of students who are black or Hispanic.

Then we examined whether there was a relationship between schools’ demographics and their ratings. We did that in two ways.

One was to calculate a correlation coefficient, a measure of how closely two variables are related. Correlation coefficients range from -1 to 1.

A 0 indicates no relationship at all (strictly speaking, no linear relationship). A 1 (or -1) indicates a perfect positive (or negative) relationship, meaning the two variables move in lockstep.

Statisticians sometimes treat a coefficient of 0.5 (or -0.5) as a sign of a strong relationship, though this depends on the context.
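For readers who want to see the arithmetic, here is a minimal Python sketch of the most common version of this measure, the Pearson correlation coefficient. It assumes Pearson's coefficient is the type being described; the function name `pearson_r` and the toy numbers are hypothetical and are not drawn from the analysis's data.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences of numbers."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Numerator: do x and y tend to sit above (or below) their means at the same time?
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Denominator: each variable's spread, which scales the result into [-1, 1].
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when a variable never varies")
    return cov / (spread_x * spread_y)


# Made-up toy data: share of low-income students (%) and rating for five schools.
pct_low_income = [10, 30, 50, 70, 90]
ratings = [9, 8, 5, 4, 2]
print(round(pearson_r(pct_low_income, ratings), 2))  # -0.99: a strong negative relationship
```

On Python 3.10 or later, `statistics.correlation(pct_low_income, ratings)` returns the same value.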

Using this approach, we found a fairly strong relationship between school ratings and a school’s share of low-income students, as well as its share of black and Hispanic students. In both cases, the correlation coefficient ranged from about -0.6 to -0.8, depending on the metro area. That means a school’s demographics are a fairly good predictor of its rating: the more low-income or black and Hispanic students a school serves, the lower its rating tends to be.

To make these findings easier to interpret, we divided schools in each area into five groups based on the proportion of their students who qualify for free or reduced-price lunch. We divided schools the same way by their share of black and Hispanic students. (Keep in mind that the groups don’t necessarily contain the same number of schools.)

Then we calculated the average GreatSchools rating for schools in each group. We also found the range of ratings covering the middle 50% of schools in each group (the interquartile range).
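The grouping and summary steps can be sketched in Python as follows. The sketch assumes the five groups are equal-width bands of 20 percentage points (0-20%, 20-40%, and so on), which would explain why the groups hold different numbers of schools; the actual cutoffs are an assumption here, as are the function names. The middle 50% is taken as the 25th to 75th percentile using `statistics.quantiles` with its "inclusive" method, one of several reasonable percentile definitions.

```python
import statistics


def band_index(pct):
    """Map a percentage (0-100) to one of five 20-point bands, numbered 0 to 4."""
    # Exactly 100% would otherwise fall into a sixth band, so cap at the top band.
    return min(int(pct // 20), 4)


def summarize_by_band(schools):
    """Summarize ratings by demographic band (hypothetical helper).

    schools: list of (pct_low_income, rating) pairs.
    Returns {band: (count, mean_rating, (p25, p75))} for every non-empty band.
    """
    bands = {}
    for pct, rating in schools:
        bands.setdefault(band_index(pct), []).append(rating)

    summary = {}
    for band, ratings in sorted(bands.items()):
        if len(ratings) >= 2:
            # quantiles(n=4) returns the three quartile cut points; keep the outer two.
            q1, _, q3 = statistics.quantiles(ratings, n=4, method="inclusive")
        else:
            q1 = q3 = ratings[0]  # a single school is its own "middle 50%"
        summary[band] = (len(ratings), statistics.mean(ratings), (q1, q3))
    return summary


# Made-up toy data for eight schools.
schools = [(5, 9), (12, 8), (18, 10), (45, 6), (52, 5), (88, 3), (95, 2), (91, 4)]
for band, (n, mean, (q1, q3)) in summarize_by_band(schools).items():
    low = band * 20
    print(f"{low}-{low + 20}% low-income: {n} schools, "
          f"average {mean:.1f}, middle 50% from {q1:.1f} to {q3:.1f}")
```

The same function works for the race-based grouping if each pair holds the share of black and Hispanic students instead.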

The difference in average ratings between the first groups (schools with the fewest low-income students and the fewest black and Hispanic students) and the last groups (those with the most) was large: between 4 and 6 points on a 10-point scale, depending on the city.

We also looked at what share of schools serving predominantly low-income black and Hispanic students (more than 80% on each measure) scored above average (higher than a 7) on GreatSchools’ ratings. In general, very few did. But that share went up substantially when we looked only at the growth component of the GreatSchools rating.
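A minimal sketch of that calculation, assuming each school record carries its overall rating, its growth rating, and the two demographic shares as percentages. The `School` record layout, field names, and the toy schools are hypothetical; "above average" is taken to mean a rating of 8 or higher.

```python
from dataclasses import dataclass


@dataclass
class School:
    # Hypothetical record layout; percentages run from 0 to 100.
    name: str
    rating: int            # overall GreatSchools rating, 1-10
    growth_rating: int     # growth component, 1-10
    pct_low_income: float
    pct_black_hispanic: float


def share_above_seven(schools, rating_of):
    """Share of high-poverty, predominantly black and Hispanic schools rated above 7.

    rating_of selects which score to test (overall rating or growth rating).
    Returns None if no school meets both 80% thresholds.
    """
    focus = [s for s in schools if s.pct_low_income > 80 and s.pct_black_hispanic > 80]
    if not focus:
        return None
    return sum(1 for s in focus if rating_of(s) > 7) / len(focus)


# Made-up toy data.
schools = [
    School("A", 3, 8, 92, 95),
    School("B", 2, 5, 85, 90),
    School("C", 8, 9, 88, 97),
    School("D", 9, 6, 12, 20),  # excluded: not a high-poverty school
]
print(share_above_seven(schools, lambda s: s.rating))         # overall rating
print(share_above_seven(schools, lambda s: s.growth_rating))  # growth component only
```

Passing the score as a function keeps the filtering logic identical for both comparisons, so any difference comes only from which rating is tested.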

Here are answers to a few other common questions.

When and how did you collect this data?

In August 2019, we took ratings data from GreatSchools’ website for all public elementary and middle schools (with a rating) in each focus city and some surrounding suburbs. Each school’s demographic data was also taken directly from GreatSchools’ site.

In a handful of cases, we found evidence that a school’s reported share of students who qualify for free or reduced-price lunch was off, sometimes implausibly low, likely reflecting errors in state reporting or known problems with using this measure as a proxy for poverty. In New York City, a number of high-achieving charter schools did not report their share of low-income students. But because there were only a handful of such examples, we’re confident that they do not change the general thrust of the results.

How did you choose which cities to focus on?

Our aim was to include several large cities from across the country with a range of demographics. We also prioritized cities with a Chalkbeat bureau, and looked to include places both with and without state-provided growth scores.

Why look at cities and suburbs as opposed to entire states?

GreatSchools took issue with Chalkbeat’s focus on metro areas, suggesting that a statewide analysis would show a weaker connection between schools’ ratings and demographics. Chalkbeat focused on metro areas because families tend to choose schools and homes near a given city or town rather than across an entire state.

Still, to test GreatSchools’ concern, Chalkbeat analyzed data on elementary schools from six states where GreatSchools provided data — Arizona, California, Colorado, Illinois, New York, and Tennessee — and compared it to data from a metro area in the same state.

In all six states, there was still a moderate to strong negative relationship between GreatSchools’ scores and elementary schools’ share of low-income students.

In three states (California, Colorado, and Illinois), there was virtually no change when looking statewide as opposed to the metro areas (San Francisco, Denver, and Chicago, respectively). In the other three states (Arizona, New York, and Tennessee), we did see a small decline statewide: the correlation was about 0.1 weaker. For instance, the correlation between ratings and a school’s share of low-income students in the Phoenix area was -0.72, but for Arizona as a whole it was -0.6.

Why did you just look at elementary and middle schools?

We focused on schools in earlier grades because that is generally when families make residential decisions with schools in mind. GreatSchools’ formula for rating high schools is also quite different from its formula for elementary and middle schools.

Our analysis of high schools in six states shows that a similar relationship with demographics exists in those schools, too, though it is generally much less pronounced.

What about other third-party school rating sites like Niche? Why focus on GreatSchools?

GreatSchools ratings are present on many major real estate websites, including Zillow, Redfin, and Realtor.com. A preliminary study released in late 2018 also looked specifically at GreatSchools ratings, and found that economic and racial residential segregation increased as a result of their introduction.

In some areas, district- or state-created ratings may carry as much or more weight than GreatSchools ratings do. But we focused on GreatSchools because of its connection to real estate. GreatSchools says it had 43 million unique visitors to its site in 2018; Zillow and its affiliated sites count more than 150 million unique visitors per month. By comparison, Niche reports 22 million annual visitors.

Does the analysis prove that GreatSchools ratings increase school segregation?

It raises that possibility, but doesn’t prove it. That would require careful research that does not currently exist. One preliminary study did find that economic and racial residential segregation increased as a result of the introduction of GreatSchools ratings. This study was done prior to the site’s 2017 ratings shift, which slightly reduced the ratings’ relationship with student demographics.

GreatSchools rejects the notion that its ratings contribute to segregation. “On the contrary, we believe that information drives equity,” said its CEO Jon Deane. “There are real issues at play here, but keeping parents informed, so that they can act on behalf of their children, isn’t the issue.”

This question aside, it’s important to remember that entrenched residential and school segregation is driven by a myriad of factors, including past government policies that restricted who can live where and who can accumulate wealth. These, and other factors, long predate GreatSchools.

Do your findings show that schools don’t matter much for student achievement — that it’s all about demographics?

No. GreatSchools ratings are strongly correlated with demographics, but there is still a range of scores even among schools with similar student populations.

Research also shows that school and teacher quality make a substantial difference for students. School spending, racial integration, and certain charter networks — among many other policy interventions — have all been linked to better academic outcomes.

Notably, the growth scores that GreatSchools uses as a subcomponent of its ratings are much less tied to demographics than the overall ratings, because they measure how much students improve relative to students who started at a similar level. That helps schools whose students arrive far behind.
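To illustrate the idea behind growth measures, here is a deliberately simplified Python sketch: each student's current score is ranked only against students who started in the same prior-score band, and a school's growth is the median of its students' ranks. This is a hypothetical simplification (the 10-point band width, the function name `school_growth`, and the toy scores are all assumptions); actual state growth models, such as student growth percentiles, use more sophisticated statistics, and GreatSchools' own calculations may differ.

```python
import statistics
from collections import defaultdict


def school_growth(students, band_width=10):
    """Simplified growth measure (hypothetical, for illustration only).

    students: list of (school, prior_score, current_score) tuples.
    Each student's current score is ranked only against peers whose prior score
    fell in the same band; a school's growth is the median of its students' ranks.
    """
    peers = defaultdict(list)
    for _, prior, current in students:
        peers[prior // band_width].append(current)

    ranks = defaultdict(list)
    for school, prior, current in students:
        group = peers[prior // band_width]
        if len(group) == 1:
            pct = 50.0  # no comparable peers, so treat the student as typical
        else:
            below = sum(1 for c in group if c < current)
            ties = sum(1 for c in group if c == current) - 1  # don't count the student
            # Percent of similar-starting peers this student outscored (ties count half).
            pct = 100 * (below + 0.5 * ties) / (len(group) - 1)
        ranks[school].append(pct)

    return {school: statistics.median(r) for school, r in ranks.items()}


# Made-up toy data: (school, prior score, current score).
students = [
    ("X", 20, 45), ("X", 22, 48),
    ("Y", 25, 30), ("Y", 27, 32),
    ("Z", 85, 90), ("Z", 88, 86),
]
print(school_growth(students))
```

In this toy example, School X's current scores (45 and 48) are far below School Z's (86 and 90), yet X ends up with the higher growth measure, because its students are compared only with peers who started near the same level.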

Is the relationship between school ratings and race really just a reflection of poverty?

No. Racial demographics independently predict school ratings, without directly considering poverty. That aligns with other evidence on how race and racism shape academic success in America.

In general, poverty and race are about equally predictive of GreatSchools ratings. This suggests that schools serving a high share of both low-income students and black and Hispanic students are doubly disadvantaged under its rating system.

Doesn’t this just show that schools serving low-income and black and Hispanic students are less effective?

That is likely part of what’s going on, but it’s not the whole story.

GreatSchools’ own data shows that when using academic growth scores, which researchers generally consider a better gauge of school quality, the relationship with demographics is substantially weaker than with GreatSchools’ overall rating.

What about the schools that buck the trend?

There are definitely some schools that don’t fit the overarching trend. For instance, in New York City a good number of charter schools, serving largely low-income black and Hispanic students, post high ratings — particularly Success Academy, which is well known for its high test scores.

Elsewhere, some virtual charter schools serve relatively few poor students but still produce low test scores.

Other outlier schools serve distinct groups of students. Rise Learning Center, for example, an Indianapolis school that scored a 1 but has few low-income students, serves students with disabilities.

Finally, a handful of outliers appear to have inaccurate demographic data, likely because it was misreported or because of problems with using free or reduced-price lunch eligibility as a proxy for poverty.

Aren’t families just looking for schools with more high-achieving students? Don’t these ratings show exactly that?

There’s some evidence that’s true. One study in New York City found that when families selected high schools, the key factor was the prior achievement of other students in the school. There’s also evidence that whiter, more affluent families seek out schools with few low-income, black, and Hispanic students. That is also where GreatSchools ratings are directing them.

In that sense, the ratings may be providing what some families desire, but this approach could also exacerbate segregation. GreatSchools does not award points to schools for being racially or economically diverse, even though diversity has been linked to benefits for students.

There is some evidence that, when presented with certain data, families can be open to schools they might not have otherwise considered. In one experiment, parents, including white and affluent ones, were more likely to select a diverse school district when given data on academic growth (as opposed to just proficiency or demographics). Another study showed that white families in New York City were more likely to move into low-income Hispanic neighborhoods where teachers were more effective, after a public release of teacher performance data.

How did GreatSchools’ new rating criteria change things?

GreatSchools changed its rating system in 2017 to include measures beyond proficiency scores. For elementary and middle schools, those included a growth rating and an “equity” rating, both of which are based on test scores.

We didn’t have access to ratings from before the change. To figure out whether the change reduced the connection between ratings and demographics, we focused on the proficiency component of the current rating. This essentially allowed us to estimate what a school’s rating would have been under the old system.

This analysis suggested that the relationship with demographics has indeed weakened under the new system. In some places, the correlation coefficient weakened by more than 0.1; in others it changed only slightly. For instance, in the Denver area the correlation with percent low-income would have been -0.84 under the old system; under the current one it’s -0.73. In the Chicago area, it went from -0.8 to -0.75.

In no case did the correlation change dramatically.

That’s likely because proficiency remains the biggest factor in GreatSchools ratings. Growth is less correlated with demographics, but it also gets less weight in GreatSchools’ rating formula, usually accounting for about 25% of a school’s overall rating.
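A toy version of that weighting shows why. The sketch below assumes a composite in which growth gets 25% of the weight and proficiency, standing in for everything else, gets the remaining 75%. These weights and the function name `composite_rating` are simplifying assumptions; GreatSchools' actual formula includes other components, such as the equity rating, and its weights vary depending on which data are available.

```python
def composite_rating(proficiency, growth, growth_weight=0.25):
    """Weighted average of two 1-10 component ratings (hypothetical weights)."""
    return (1 - growth_weight) * proficiency + growth_weight * growth


# Low proficiency, strong growth: the composite stays low.
print(composite_rating(proficiency=2, growth=9))  # 3.75
# High proficiency, weak growth: the composite stays high.
print(composite_rating(proficiency=9, growth=2))  # 7.25
```

Under these assumed weights, moving growth from the bottom of the scale to the top (a 9-point swing) shifts the composite by at most 2.25 points, so a rating built this way keeps most of proficiency's connection to demographics.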

In some places — including California — the state does not provide a growth score, forcing GreatSchools to calculate its own score based on less granular data. This proxy growth rating is strongly correlated with demographics, similar to proficiency scores, according to our analysis.