Earlier this month, as Mayor Eric Adams doubled down on the importance of keeping schools open despite a wave of COVID infections, he pointed to “frightening” evidence that learning has stalled during the pandemic.

“We’ve lost two years of education,” he said. “The fallout is unbelievable.”

The mayor didn’t share the numbers that alarmed him, nor did the education department disclose them despite repeated requests.

Three school years into the pandemic, the public still knows very little about how students are faring academically in the nation’s largest school district — an early epicenter of the pandemic also hit hard by the omicron wave.

The education department’s attempt to get a handle on that has included spending $36 million on a battery of assessments meant to measure what students know and track their progress after nearly two years of massively interrupted learning time. Schools are now in the midst of the second round of these tests, after administering an initial round in the fall.

Education department spokesperson Sarah Casasnovas insisted that test results were helpful in addressing “learning gaps.”

“This data supports student progress throughout the year as educators identify where students need help, opportunities for enrichment, when to adjust classroom instruction, and provide individualized support as needed,” she wrote.

But some educators say the time-consuming tests have provided little useful information, and none of the data has been shared publicly.

Classroom concerns

In kindergarten through second grade, the education department has recommended that schools use the literacy screening tool created by the nonprofit Acadience.

The preferred math tests for all grades are i-Ready, from the for-profit Curriculum Associates, or the Measures of Academic Progress (MAP) Growth assessment, created by the nonprofit NWEA. Those assessments are also recommended for older students for English.

The assessments have not been popular with the elementary school students in Giuliana Reitzfeld’s Brooklyn classroom.

Her students have come to dread logging onto i-Ready, she said, going so far as to draw tombstones for characters from the program with the letters “RIP.” Her students even once held a mock funeral service at recess.

“It’s very apparent that they’re not enjoying it,” Reitzfeld said.

Plenty of schools have relied on tests, given regularly, to help track what students know. Before the education department mandated them, at least 1,200 out of some 1,600 schools already used some form of periodic assessments, and at least 400 were already using MAP.

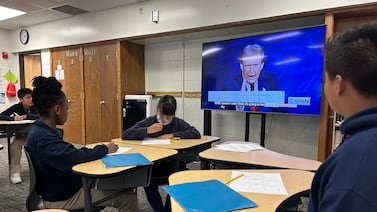

High school English teacher Ted Dickhudt had previously opted to assess his students throughout the year to gauge their reading skills. With that information, he knew which students he should spend extra time with, to help them eventually pass the culminating state Regents exams.

This year, however, his school had to choose a test from the city’s limited menu of options, instead of the programs he has come to rely on. Leaders at the Washington Heights school chose to administer the MAP Growth tests.

The new tests were so logistically and technically difficult to administer, Dickhudt said, that troubleshooting felt like playing tech whack-a-mole. The experience prompted him to file a union grievance, his first in 15 years of teaching. He also objected that the texts were not culturally relevant to his students, with most passages written by white men.

Dickhudt said the assessments didn’t tell him anything he didn’t already know: His students have fallen behind. Historically, his 10th graders came to him with a reading level expected of a student in the middle of eighth grade. This year, his students scored the same as a seventh grader just starting off the school year.

“That data was striking, and to MAP Growth’s credit, the data kind of made sense, right? They missed a year and a half of school, and grade levels dropped by a grade and a half,” he said.

But when he tried to drill down into what students were struggling with — were they reading too slowly, having trouble pinning down the main idea, or did vocabulary stump them? — the test reports did not provide the same level of detail he’s used to, Dickhudt said. It was hard for him to use the information to guide his teaching.

“For me as a teacher, these kinds of assessments can be very helpful, but not this one. And not the way the [education department] is mandating it,” he said.

Lack of transparency

Nationally, the toll of the pandemic on learning has been grim. Data has shown that progress stalled in both reading and math, especially among younger students. Black, Latino, and low-income students have been hardest hit. In neighboring Newark, just 9% of students met state expectations in math last year, and 11% in reading.

There’s very little public data on where students stand in New York City. State tests were canceled in 2020, and when the third through eighth grade exams resumed last spring, families had to opt their children in to take them. Only one in five students did.

Implementing periodic assessments reflected a long-term goal of former Chancellor Richard Carranza. He believed that giving the same tests throughout the school system would provide clearer information about how students are doing, and where to invest the education department’s resources.

Adams and his chancellor, David Banks, have said little in their first month about the academic recovery plans created by the de Blasio administration. Banks has already removed the department’s previous chief academic officer, appointing former Bronx principal and teacher Carolyne Quintana as deputy chancellor of teaching and learning. The education department did not respond to requests to interview Quintana.

Even if the city’s data were shared more widely, academics cautioned they might not be that useful for understanding the pandemic’s impacts on student learning. Some schools administered the tests for the first time this year, meaning there might not be earlier data to compare the results against. Schools were also given a menu of tests to choose from, which could further complicate comparisons.

Some education experts said the types of assessments being given simply aren’t that reliable, and were wary of releasing data that could be used to unfairly judge schools.

“I understand the policy drive to ‘know,’ and that it typically reflects a legitimate focus on helping students,” said José Felipe Martínez, an associate professor at UCLA who studies assessments. “But I think more thought should be given to whether excessive focus on ‘knowing’ or ‘monitoring progress’ can in effect become counterproductive to said progress.”

James Kemple, executive director of the Research Alliance for New York City Schools, said the results could be helpful in making decisions in individual classrooms and schools about how to deploy resources, such as extra tutoring for specific students, though he cautioned that the data should be used “very, very carefully.”

How the data is (or isn’t) used

On the ground, educators say they are struggling to use the data in meaningful ways, particularly if it’s their first time using the specific assessments mandated by the education department.

One Brooklyn assistant principal, who asked to remain anonymous, said the only assistance given so far has been workshops on how to pull data reports. The training was more technical than pedagogical.

Bobson Wong, a math teacher in Queens, said the same was true for him. Though his department spent some time looking at student scores together, he said the reports generated don’t provide a level of detail that’s useful for informing his instruction. To get more granular information about which skills students should spend time practicing, he relies on other computer programs that students use to complete homework.

Wong was frustrated that his class was already behind on content, and had lost even more class time to take the tests. Some students took three class periods to complete them.

“The social and emotional trauma these kids are going through is really overpowering and it’s hard for me to teach students content under these circumstances,” he said. “We can’t afford to spend more days on testing, to give us information that we already know.”