
In the small world of education research, the Stanford-based institute CREDO is a big name.

The organization has produced a series of much-cited, oft-debated studies on charter school performance since 2009.

Its latest research, released in June, concluded that charter schools outperform district schools on both reading and math exams. The results have drawn significant attention: The Wall Street Journal editorial board, for instance, claimed the findings are “unequivocal” and show that charter schools are “blowing away their traditional school competition in student performance.”

The study is likely to be a key data point for years to come in continued policy debates over charter schools. But are the results as conclusive as the Journal and others have suggested? Not quite.

The research provides credible evidence that charter schools now have a test-score edge over district schools, although the advantage is small. But CREDO’s methods — which other researchers say have significant limitations — mean the conclusions should be viewed with some caution. Moreover, CREDO’s description of “gap-busting” charter schools may be widely misinterpreted.

CREDO: Charter schools have small performance edge

CREDO researchers draw on a vast swath of data across 29 states plus Washington, D.C., to compare students’ academic growth in charter and district schools from the 2014-15 through 2018-19 school years. CREDO concludes that achievement growth is, on average, higher in charter schools.

How much higher? Charter schools add 16 days of learning in reading and six days in math, CREDO says. This “days of learning” metric is controversial among researchers, though, and hard to interpret.

Here’s another way of thinking about the same results: CREDO found that attending a charter school for one year would raise the average student’s math scores from the 50th percentile to the 50.4th percentile and reading scores from the 50th to the 51st percentile. By conventional research standards and common sense, these impacts are small.
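For readers who want to see how small those shifts are in the units researchers usually use, the percentile figures can be translated into standard deviations. The sketch below is not CREDO's calculation. It assumes test scores follow a normal distribution and uses only the rounded percentiles reported above, so the results are approximate. The function name `percentile_to_effect_size` is made up for this illustration.

```python
from statistics import NormalDist


def percentile_to_effect_size(percentile: float) -> float:
    """Shift, in standard deviations, that moves a 50th-percentile student
    to `percentile`, ASSUMING scores are normally distributed."""
    return NormalDist().inv_cdf(percentile / 100.0)


# Rounded percentiles as reported in the article (approximate inputs).
math_sd = percentile_to_effect_size(50.4)
reading_sd = percentile_to_effect_size(51.0)

print(f"math: about {math_sd:.3f} standard deviations")
print(f"reading: about {reading_sd:.3f} standard deviations")
```

Under that assumption, the math gain works out to roughly 0.01 standard deviations and the reading gain to roughly 0.025, both well below what researchers typically call a large effect.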

“Generally, those aren’t seen as big effects,” said Ron Zimmer, a professor at the University of Kentucky who has studied charter schools. “They’re modest.”

That said, moving the needle on educational achievement even slightly is challenging, and these effects apply across a large swath of students who attended charter schools.

The charter effect varies widely across the U.S.

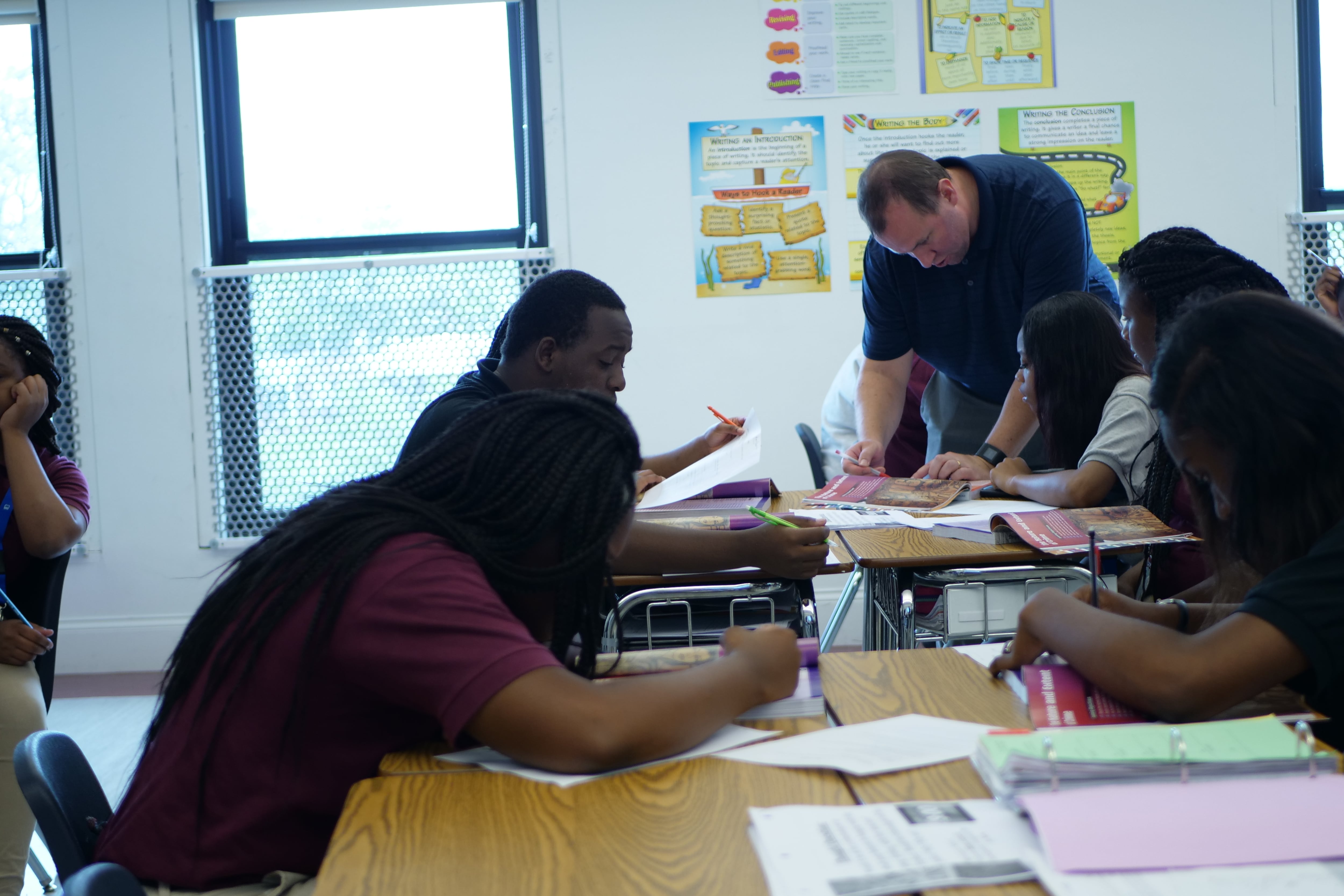

Generally, charter schools in the Northeast, including New York, Massachusetts, and Rhode Island, posted larger test score gains, according to CREDO. Charter networks outperformed stand-alone schools. Some of these networks improved test scores quite substantially, which is consistent with prior research looking at so-called “no excuses” charter schools, such as KIPP.

Overall, Black, Hispanic, and low-income students seemed to benefit more from attending a charter school. Here, the size of improvement might be described as small to moderate.

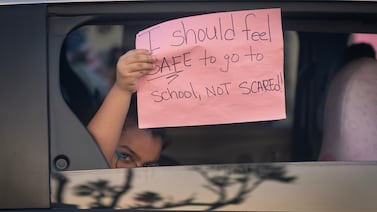

On the other hand, virtual charter schools had large negative effects, according to CREDO. Notably, since the pandemic these schools have expanded substantially. (CREDO’s data did not include any post-pandemic years.) Students with disabilities also appeared to perform worse in charter schools than in district schools.

CREDO’s methods come with important caveats

CREDO reaches its conclusions by matching charter school students with one or more “virtual twins” from a nearby district school. The “twins” are other students who have a similar set of characteristics, including test scores and free-or-reduced price lunch status (a proxy for family income). Then the researchers compare test score growth across millions of students in charter schools versus their virtual twins in district schools.

CREDO’s methods are a serious attempt to understand the effects of charter schools, but this strategy has limitations that are well-known among researchers. The basic problem is that the “virtual twin” approach does not guarantee a truly apples-to-apples comparison.

For instance, CREDO researchers may match two students who both have a disability, but those students may have very different types of disabilities. CREDO also cannot directly account for numerous other factors, such as student or parent motivation, that may lead to enrollment in charter schools.

These methods may be particularly problematic for examining students in unusual situations, such as those who opt for virtual schools because of personal challenges like bullying or illness. (Another problem is that CREDO has to exclude one in five charter students because its researchers can’t find a suitable “virtual twin” for them. We don’t know if those students would shift the overall findings.)

Macke Raymond, the director of CREDO, says she is confident in the center’s findings but acknowledges that the methods are constrained by the data.

“There is no way with the amount of data that is available to researchers that we can measure every single possible dimension of all students and their backgrounds,” she said.

CREDO’s prior analysis suggests potential for small bias in results

No research method is perfect, so it is common for researchers to subject their conclusions to a battery of statistical tests to confirm the results.

CREDO did not do this in its most recent study. Instead, it features an appendix from a 2013 study that compared findings from its main “virtual twin” method to those from a different, commonly used statistical approach. CREDO showed that the results from these two methods were not far off from each other.

But they were not identical. The CREDO researchers found in 2013 that charter schools had slightly worse results under the alternative method — by about 12 days of learning in math, to use the study’s metric. Again, this difference was small, but a shift of 12 days of learning would be enough to flip the recent math results from slightly positive to slightly negative.
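The arithmetic behind that point is simple, and it can be written out. This is a hypothetical illustration only: it assumes the 12-day difference CREDO found in 2013 would carry over unchanged to the latest study, which CREDO did not test. The variable names are invented for this sketch.

```python
# Figures come from the article. Applying the 2013 difference to the
# new study is a what-if scenario, not a result CREDO reported.
math_days_main_method = 6   # latest study, "virtual twin" method
gap_2013_days = 12          # 2013 difference between the two methods

math_days_alt_method = math_days_main_method - gap_2013_days
print(math_days_alt_method)  # -6
```

In that scenario, the math result would move from six days of added learning to six days of lost learning.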

James L. Woodworth, a researcher at CREDO, acknowledged this point, but said the alternative method was not necessarily preferable to the main model. CREDO also points to analyses by other researchers who have shown that its findings are fairly close to those of other methods. As for not doing further checks in the most recent study, Woodworth said, “We felt we had done our due diligence.”

Zimmer, the University of Kentucky researcher, says there is no perfect way to study the effects of charter schools and that CREDO’s approach is defensible. But he said the study would have benefitted from additional tests to support its results.

“It sure would be nice to say, here’s our model and here’s what we’re relying on, and we also checked it in other ways to see if it came to similar substantive conclusions,” he said.

CREDO’s description of ‘gap-busting’ schools may be misunderstood

One particularly evocative conclusion from CREDO’s latest study is its description of “gap-busting” or “gap-closing” charter schools. “These ‘gap-busting schools’ show that disparate student outcomes are not a foregone conclusion: people and resources can be organized to eliminate these disparities,” CREDO researchers write. “The fact that thousands of schools have done so removes any doubt.”

Typically when people talk about the “achievement gap,” they mean disparities in absolute levels of performance between, for instance, low-income and more affluent students. But that’s not how CREDO defines these gaps.

CREDO considers a school “gap-busting” if its overall achievement is above the state average and its historically disadvantaged students show growth similar to that of more advantaged students in the same school.

A school could meet this definition without closing gaps in student outcomes, though. Research has long shown that students from low-income families, on average, enter school with lower achievement levels compared to better-off peers. That means that similar rates of growth would not eliminate disparities in performance. CREDO does not examine whether actual gaps in overall achievement had closed in the schools it defines as “gap-busting.”
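A made-up example shows why equal growth leaves a gap in place. The starting scores and growth rates below are invented purely for illustration and do not come from CREDO's data. The helper `gap_over_time` is likewise hypothetical.

```python
def gap_over_time(start_a, start_b, growth_a, growth_b, years):
    """Achievement gap (group A minus group B) at the start and after each
    year, assuming each group gains a fixed number of points per year."""
    return [
        (start_a + growth_a * year) - (start_b + growth_b * year)
        for year in range(years + 1)
    ]


# Invented numbers: group A starts 10 points ahead of group B.
equal_growth = gap_over_time(60.0, 50.0, growth_a=5.0, growth_b=5.0, years=3)
faster_growth = gap_over_time(60.0, 50.0, growth_a=5.0, growth_b=7.0, years=3)

print(equal_growth)   # [10.0, 10.0, 10.0, 10.0]
print(faster_growth)  # [10.0, 8.0, 6.0, 4.0]
```

With identical growth, the 10-point gap never shrinks. It narrows only when the group that started behind grows faster.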

“A lot of those schools where we’re not seeing a growth gap, they’re still going to have an achievement gap,” said Woodworth.

Matt Barnum is a national reporter covering education policy, politics, and research. Contact him at mbarnum@chalkbeat.org.